The Place to Be

November 29th, 2021 | by Marius Dupuis

Since the very beginning of our blog, we have been arguing that digital twins should be reused as widely as possible. We are therefore pleased to see targeted commercial offerings and marketplaces for trading digital assets emerging in various formats and with different scopes. Time to take a closer look.

Our Domain

The marketplaces that drew our attention in recent weeks and that led to the creation of this post are the following (note: this list is by no means exhaustive and is mostly driven by initiatives that made it to our news bubble recently):

Envited Marketplace (https://envited.market)

- 3D environments for Unreal Engine, Unity, OSGB

- Sample data for LiDAR, RADAR and Camera

- OpenDRIVE data sets

- OpenCRG data sets

- Simulation and data editing tools

ALP.Lab Platform (https://www.alp-lab.at/platform)

- Data by ASFINAG

  - Traffic data

  - Video

  - Track

  - Long term – for traffic statistics

  - Short term – for traffic control

  - Individual vehicles – for traffic control and microscopic modeling

  - Environment data (e.g. weather)

  - C-ITS Cooperative Awareness Messages

  - C-ITS Decentralized Environmental Notification Messages

  - C-ITS In-Vehicle Information Messages

  - Traffic data

- Data by JOANNEUM RESEARCH

  - UHDMaps(R) (highway, rural, urban) (read more about it in our ALP.Lab UHDMaps(R) feature)

- Data by ALP.Lab

  - Traffic monitoring data (LiDAR / RADAR / optical)

LevelXdata (https://levelxdata.com)

- Real-world trajectories of vehicles and vulnerable road users

- Provided in various categories (urban, rural, …)

- Perception ground truth data for dedicated vehicles

- OpenDRIVE data sets

- 3D environments (3D point clouds)

Safety Pool (https://www.safetypool.ai/database)

- Scenario database (categories: expert knowledge, accidents, naturalistic, …)

- Visualization data for CARLA

IAMTS – International Alliance for Mobility Testing and Standardization (https://iamts.sae-itc.com)

- Autonomous vehicle testing data from registered testbeds

- Advanced mobility testbed database (to be released)

(German) Government data (https://www.govdata.de)

- OpenDRIVE data (“Testfeld A9”)

As noted, this list is by no means an exhaustive collection of data providers or marketplaces. Out of scope in this post are open data portals from public authorities. Feel free to let us know who we missed in terms of the nature and structure of data provided or in terms of the business model. We’ll be happy to hear from you (contact@geonatives.org)!

The Data Sets

Data sets can be split into two groups: static and dynamic data. Static data will typically be models or maps of traffic networks and/or 3D environments (from simplistic representations to highly detailed city models). Traffic networks and maps may well exist without 3D environments, whereas the opposite is hardly ever the case.

Dynamic data include but are not limited to time-variant positions and orientations of traffic participants, provided either as raw data or as pre-processed tagged objects with resulting trajectories or even categorized as elements of so-called scenarios. Additionally, weather data and similar may be considered dynamic.

Outside our scope, but also worth naming among mobility data sets in general, are (live) traffic data (example: San Francisco), traffic and traffic demand models (example: Switzerland), and other data provided by municipalities and other authorities.

As you can see from our list above, the nature and extent of the data sets are rather heterogeneous; but there should be something on offer for most of the potential users. A major concern might be potential inconsistency of data sets. What, for example, if one party offers trajectories but not the underlying road network? This means that you might need to combine data from various sources and you might end up working on data fusion instead of performing your actual task. Therefore, providers deriving various kinds of data from a single source may inherently have an edge over their competitors.
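To illustrate the kind of consistency check that becomes necessary when trajectories and the road network come from different sources, here is a minimal sketch. It only tests whether trajectory points fall within the extent of the road network at all. The names `BoundingBox` and `coverage_ratio` are our own inventions for this illustration, and the sketch assumes that both data sets are already given in the same projected coordinate system in metres, which in practice is often the first thing that turns out not to be true.

```python
from dataclasses import dataclass
from typing import Iterable, Sequence, Tuple

Point = Tuple[float, float]  # (x, y) in metres; same projected CRS is assumed


@dataclass(frozen=True)
class BoundingBox:
    """Axis-aligned extent of a set of points (hypothetical helper)."""
    min_x: float
    min_y: float
    max_x: float
    max_y: float

    @classmethod
    def from_points(cls, points: Iterable[Point]) -> "BoundingBox":
        pts = list(points)
        if not pts:
            raise ValueError("cannot build a bounding box from zero points")
        xs = [p[0] for p in pts]
        ys = [p[1] for p in pts]
        return cls(min(xs), min(ys), max(xs), max(ys))

    def contains(self, point: Point, margin: float = 0.0) -> bool:
        x, y = point
        return (self.min_x - margin <= x <= self.max_x + margin
                and self.min_y - margin <= y <= self.max_y + margin)


def coverage_ratio(road_points: Sequence[Point],
                   trajectory: Sequence[Point],
                   margin: float = 5.0) -> float:
    """Fraction of trajectory points inside the road network's extent."""
    if not trajectory:
        return 0.0
    box = BoundingBox.from_points(road_points)
    inside = sum(1 for p in trajectory if box.contains(p, margin))
    return inside / len(trajectory)


# Sampled reference-line points of a road network and one trajectory.
road_points = [(0.0, 0.0), (100.0, 0.0), (100.0, 50.0)]
trajectory = [(10.0, 5.0), (50.0, 20.0), (200.0, 20.0), (99.0, 49.0)]
ratio = coverage_ratio(road_points, trajectory)
```

A low ratio is a cheap early warning that two data sets do not belong together. A high ratio proves very little, since a bounding box says nothing about whether a trajectory actually follows a lane.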

The Parties

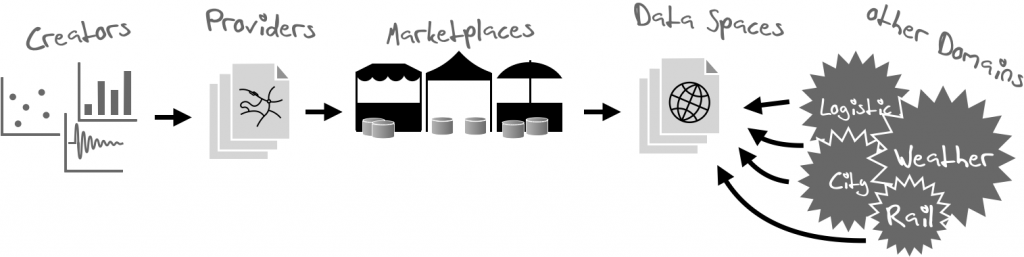

Providers of marketplaces are part of a mobility ecosystem whose stakeholders we have described in a different blog post (see stakeholders of mobility).

Marketplaces are maintained by parties that may – but don’t have to – be the actual providers, or even the creators, of the data packages on offer within them. This leaves some room for discussion whether a specific marketplace may rightfully bear its name or is just to be considered a wrapper around an individual provider’s offer. It might be too early to judge existing marketplaces by this criterion but, of course, we will very much welcome marketplaces that offer data packages from different vendors.

Data Labeling and Quality

Providing data is easy; providing the right data is a challenge. This common principle also applies to data on offer in marketplaces. A key issue when looking for data and comparing offerings is that there seems to be neither a common content classification nor a common set of quality indicators for the data available within a marketplace. It is therefore hard to know which data are well suited and good enough for a given task.

Several marketplaces provide “scenario data” or “map data”, but it is hard to find a common interpretation of what these labels mean. Here, we see a big gap. Filling it, either by agreeing on commonly accepted definitions or by proposing a reasonably structured classification system, would benefit the community. It could be the job of the marketplaces to deliver a normalized quality level statement, at least for the data they deliver.

The same applies to the quality of the data sets. Some providers (e.g. 3D Mapping Solutions) define data quality rather clearly and make it easy to grasp even for non-experts in data collection by providing abstracted quality levels (here: gold, silver and platinum quality categories). Others provide no information at all, or they provide precision indicators that might be hard to transfer from one data set to another.

We would highly appreciate it if an easy-to-grasp, harmonized system were available for inexperienced users, while experts could compare data sets along harmonized KPIs. Anything else may just lead to confusion and even to a poorly informed purchase decision.
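As a rough sketch of how the two views could coexist, the snippet below keeps a small set of KPIs for the experts and derives an abstract quality level for everyone else. Everything in it is an assumption made for illustration: the KPI names, the thresholds, and the ordering of the tiers are invented and do not reflect the definitions of any provider or standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityKpis:
    """Hypothetical harmonized KPIs for a map data set."""
    absolute_accuracy_m: float   # worst-case absolute position error in metres
    relative_accuracy_m: float   # worst-case error between neighbouring features
    feature_completeness: float  # share of required features present, 0.0 to 1.0


# (tier, max absolute error, max relative error, min completeness),
# ordered from strictest to most lenient. All thresholds are invented.
TIERS = (
    ("platinum", 0.05, 0.02, 0.99),
    ("gold", 0.10, 0.05, 0.95),
    ("silver", 0.50, 0.20, 0.90),
)


def quality_tier(kpis: QualityKpis) -> str:
    """Return the strictest tier whose thresholds are all met."""
    for name, max_abs, max_rel, min_complete in TIERS:
        if (kpis.absolute_accuracy_m <= max_abs
                and kpis.relative_accuracy_m <= max_rel
                and kpis.feature_completeness >= min_complete):
            return name
    return "unrated"


survey_grade = QualityKpis(0.03, 0.01, 0.995)
crowd_sourced = QualityKpis(2.0, 0.5, 0.80)
tier_a = quality_tier(survey_grade)
tier_b = quality_tier(crowd_sourced)
```

The point of the design is that the tier is derived from the KPIs rather than declared separately, so the easy-to-grasp label and the expert view cannot drift apart.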

An alternative, or rather an extension, might be tooling that allows users to assess the quality of data sets themselves. One initiative in the process of making this kind of tooling available is the OpenDRIVE Quality Assurance Framework, which was first presented by AUDI at the ASAM OpenDRIVE workshop on Oct. 09, 2018 (see “OpenDRIVE Quality Assurance Framework” or this link). We are eagerly waiting for a commonly available tool set under the umbrella of ASAM e.V. that helps users of ASAM’s OpenX standards to verify their data sets.
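To give an idea of what a self-service check might look like, here is a small sketch of our own. It is not the framework presented by AUDI and not an ASAM tool. It merely verifies one basic property of an OpenDRIVE file: that the length declared on each `road` element matches the sum of the `geometry` lengths in its `planView`. The function name `check_road_lengths` and the tolerance are assumptions, and the sketch assumes a file without an XML namespace and with numeric `length` attributes on the geometries.

```python
import math
import xml.etree.ElementTree as ET
from typing import List


def check_road_lengths(xodr_text: str, tolerance_m: float = 0.01) -> List[str]:
    """Return human-readable findings; an empty list means nothing was found."""
    findings = []
    root = ET.fromstring(xodr_text)
    for road in root.iter("road"):
        road_id = road.get("id", "<missing id>")
        try:
            declared = float(road.get("length", "nan"))
        except ValueError:
            declared = math.nan
        if math.isnan(declared) or declared <= 0.0:
            findings.append(f"road {road_id}: missing or non-positive length")
            continue
        geometries = road.findall("./planView/geometry")
        if not geometries:
            findings.append(f"road {road_id}: no planView geometry")
            continue
        # The reference line is the concatenation of all geometry records.
        total = sum(float(g.get("length", "0")) for g in geometries)
        if abs(total - declared) > tolerance_m:
            findings.append(
                f"road {road_id}: geometry lengths sum to {total:.3f} m, "
                f"road declares {declared:.3f} m"
            )
    return findings


SAMPLE = """<OpenDRIVE>
  <road id="1" length="100.0">
    <planView>
      <geometry s="0.0" x="0" y="0" hdg="0" length="60.0"><line/></geometry>
      <geometry s="60.0" x="60" y="0" hdg="0" length="40.0"><line/></geometry>
    </planView>
  </road>
  <road id="2" length="50.0">
    <planView>
      <geometry s="0.0" x="0" y="10" hdg="0" length="30.0"><line/></geometry>
    </planView>
  </road>
  <road id="3" length="20.0"/>
</OpenDRIVE>"""

findings = check_road_lengths(SAMPLE)
```

In this sample, road 1 passes while roads 2 and 3 are reported. A real tool set would of course cover far more than this single rule, but even checks of this size catch a surprising share of improperly conditioned data.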

Business Models

The business models of marketplaces don’t seem to follow a clear rule set. Some make data available depending on a level of partnership (whatever it may mean to become a “friend” or a “partner”), some don’t really name their conditions and others will provide data primarily to parties who are willing to provide additional data in return.

Strangely enough, in more than one marketplace we found that even receiving more information about available data sets (i.e., not the data sets themselves) requires some kind of registration or “friendship” status.

And in one case, we even found a data set on offer within a marketplace that was otherwise available for free from the originator. A nice hyperlink to this free data set would have been a much better option, and the fact that it is missing might damage the trust which can be put into an offer.

Overall, the little market research we conducted leaves the impression that the marketplaces we found are still at a very early stage of maturity. Some offers appear to be more or less “teasers” and seem to aim at generating leads for additional services instead of providing a large pool of data to choose from.

One Level Up: Data Spaces

Is there a way to make this situation “worse”? Yes, and it’s named “Data Spaces”. One example, which had its debut at the ITS World Congress in Hamburg in 2021, is the so-called “Mobility Data Space”.

Just to be clear: it is, indeed, a good idea to collect offerings across domains in a central place and enable the secure peer-to-peer exchange of mobility data (vehicle data, weather, road works etc.).

But is the provision of the platform alone, i.e. the mere technology skeleton, sufficient? Do you create trust if you keep quiet about the commercial terms underlying the bilateral relationship between data providers and consumers? Or should a minimum framework of terms and conditions be mandatory for all offerings in the data space? As discussions on the exhibition floor showed, there is currently also no plan to provide user guidance on the quality or fitness of offered data or to even make a minimum quality barrier mandatory for listing data in the data space.

If data spaces only aim at bridging the gap between users who need cross-domain data and the different providers, they are solving only part of the problem. In our daily work, we always face the same issues with data:

- unknown accuracy

- unknown feature completeness

- custom formats

- improperly conditioned data in standard formats

- etc.

And we almost always have to pre-process (read: debug) data individually before they can be injected into a processing pipeline and fused with other data.

Having access to yet more data within a data space whose provider doesn’t care about these key issues may only multiply the burden on potential users.
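A minimum barrier for listing data would not have to be elaborate. As a sketch under our own assumptions, a data space could refuse listings whose metadata do not declare the very points from the list above. The field names and the `listing_problems` function below are hypothetical and are not part of any existing data space.

```python
from typing import Any, Dict, List

# Hypothetical minimum metadata a listing would have to declare.
REQUIRED_FIELDS = {
    "format": str,                  # e.g. "OpenDRIVE 1.6" rather than "custom"
    "absolute_accuracy_m": float,   # addresses "unknown accuracy"
    "feature_completeness": float,  # addresses "unknown feature completeness"
    "validated_against": str,       # tool or schema used to check conformance
}


def listing_problems(manifest: Dict[str, Any]) -> List[str]:
    """Return the reasons why a listing would be rejected (empty if none)."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in manifest:
            problems.append(f"missing field: {field}")
        elif not isinstance(manifest[field], expected_type):
            problems.append(
                f"wrong type for {field}: expected {expected_type.__name__}"
            )
    completeness = manifest.get("feature_completeness")
    if isinstance(completeness, float) and not 0.0 <= completeness <= 1.0:
        problems.append("feature_completeness must be between 0.0 and 1.0")
    return problems


good = {
    "format": "OpenDRIVE 1.6",
    "absolute_accuracy_m": 0.1,
    "feature_completeness": 0.97,
    "validated_against": "schema check",
}
bad = {"format": "custom CSV", "feature_completeness": 1.4}

good_problems = listing_problems(good)
bad_problems = listing_problems(bad)
```

Such a gate does not verify that the declared values are true. It only ensures that a provider has to state them, which already gives users something to compare and to hold the provider to.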

Conclusion

It’s good to see that data are becoming a tradeable asset for a broader public. There is no question about that. If we ever want to get mobility going and avoid surveying everything again and again, sharing data is the way to go.

But what we need is a clear terminology for the data types, a clear and standardized indication of data quality and, for future-proof planning, a clear business model and a sufficiently detailed disclosure of available assets, so that the offerings from different vendors can be compared and a clear choice for the best fit can be made.

Finally, everyone aggregating offers should feel responsible for the data on offer. Providing only data that have been checked against a list of KPIs that can be matched with various use cases might even be part of a business model and become a unique selling point. As an analogy, think of a peer-reviewed journal, which aims to ensure a base quality level of scientific publications within a specific domain of research.

Having said that, we are looking forward to seeing not just a digital twin of reality but a sufficiently defined and attributed digital twin.